Workshop on urban growth modeling with FUTURES: Difference between revisions

⚠️Dbvanber (talk | contribs) |

⚠️Dbvanber (talk | contribs) |

||

| Line 69: | Line 69: | ||

=== Creating a GRASS database for the tutorial === | === Creating a GRASS database for the tutorial === | ||

You need to create a GRASS database with the Mapset that we will use for the tutorial before we can run the FUTURES model. Please Download the [http://fatra.cnr.ncsu.edu/futures/futures_ncspm_Asheville.zip workshop location]. | You need to create a GRASS database with the Mapset that we will use for the tutorial before we can run the FUTURES model. Please Download the [http://fatra.cnr.ncsu.edu/futures/futures_ncspm_Asheville.zip workshop location], noting where the files are located on your local directory. Now, create (unless you already have it) a directory named <tt>grassdata</tt> (GRASS database) in your home folder (or Documents), unzip the downloaded data into this directory. You should now have a Location <tt>futures_ncspm</tt> in <tt>grassdata</tt>. | ||

* Launch GRASS and display raster, vector data, explore navigation, querying, adding legend, showing metadata | * Launch GRASS and display raster, vector data, explore navigation, querying, adding legend, showing metadata | ||

<center><gallery perrow=3 widths=500 heights=250>Image:GRASS FUTURES startup.png|GRASS GIS 7.0.3 startup dialog with downloaded Location and Mapsets for FUTURES workshop | <center><gallery perrow=3 widths=500 heights=250>Image:GRASS FUTURES startup.png|GRASS GIS 7.0.3 startup dialog with downloaded Location and Mapsets for FUTURES workshop | ||

Revision as of 18:42, 22 March 2016

Workshop introduction

r.futures.* is an implementation of the FUTure Urban-Regional Environment Simulation (FUTURES)[1] which is a model for multilevel simulations of emerging urban-rural landscape structure. FUTURES produces regional projections of landscape patterns using coupled submodels that integrate nonstationary drivers of land change: per capita demand (DEMAND submodel), site suitability (POTENTIAL submodel), and the spatial structure of conversion events (PGA submodel).

[1] Meentemeyer, R. K., Tang, W., Dorning, M. A., Vogler, J. B., Cunniffe, N. J., & Shoemaker, D. A. (2013). FUTURES: multilevel simulations of emerging urban-rural landscape structure using a stochastic patch-growing algorithm. Annals of the Association of American Geographers, 103(4), 785-807

Software

There are a number of software requirements to run FUTURES that will need to be download and installed. Required software includes:

- GRASS GIS 7

- addons

- R (≥ 3.0.2) - needed for r.futures.potential with packages:

- MuMIn, lme4, optparse, rgrass7

- SciPy - useful for r.futures.demand

Installation

We use the R statistical software for our calculation of site suitability (i.e. development potential) in FUTURES. R is a free software environment for statistical computing and graphics. If you don't have R, or you have older an version, please download and install the software from install R and be sure to additionally add these required packages:

install.packages(c("MuMIn", "lme4", "optparse", "rgrass7"))

MS Windows

We will be using GRASS GIS 7.0.3 that can be found here in the OSGeo4W package manager. Please install the latest stable GRASS GIS 7 version and the SciPy Python package using the Advanced install option. A guide with step by step screen shot instructions is available here.

In order for GRASS to be able to find R executables, GRASS must be on the PATH variable. Follow this [solution] to make R accessible from GRASS permanently. A simple but temporary solution is to open GRASS GIS and paste this in the black terminal:

set PATH=%PATH%;C:\Program Files\R\R-3.0.2\bin

This has to be repeated after restarting GRASS session.

Ubuntu Linux

Install GRASS GIS from packages:

sudo add-apt-repository ppa:ubuntugis/ubuntugis-unstable sudo apt-get update sudo apt-get install grass

Workshop data

The data that we will be using for the workshop can be download here: sample dataset. Please place these layers in your local GRASS directory:

- digital elevation model (NED)

- NLCD 2001, 2011

- NLCD 1992/2001 Retrofit Land Cover Change Product

- transportation network (TIGER)

- county boundaries (TIGER)

- protected areas (Secured Lands)

- cities as points (USGS)

In addition, download population information as text files:

- county population past estimates and future projections (NC OSBM) per county (nonspatial)

GRASS GIS introduction

There are many advantages to using the open source GRASS GIS platform, but for many that are more familiar with ARCGis and QGis, the setup and implementation of spatial tools in GRASS can be confusing. Here we provide an overview of the GRASS GIS project: grass.osgeo.org that might be helpful to review if you are a first time users. For this exercise it's not necessary to have a full understanding of how to use GRASS. However, you will need to know how to place your data in the correct GRASS database directory, as well as some basic GRASS functionality. Step by step instructions are provided below

GRASS directory system

GRASS uses unique database terminology and structure (GRASS database) that are important to understand for the set up of this tutorial, as you will need to place the required data (e.g. Mapset) in a specific GRASS database Location.

Important GRASS directory terminology

- A GRASS database consist of directory with specific Locations (projects) where data layer are stored

- Location hold all Mapsets in a specific directoy that have the same spatial projection (spatial reference system)

- Mapset are a collection of maps with the same spatial projection

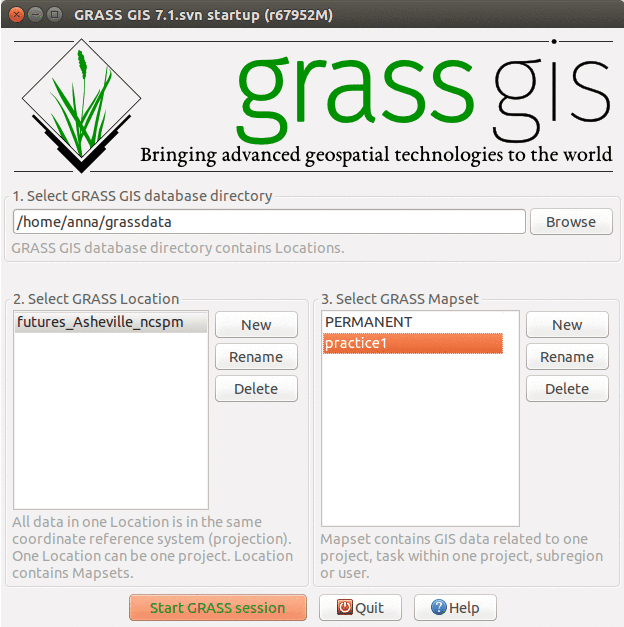

Creating a GRASS database for the tutorial

You need to create a GRASS database with the Mapset that we will use for the tutorial before we can run the FUTURES model. Please Download the workshop location, noting where the files are located on your local directory. Now, create (unless you already have it) a directory named grassdata (GRASS database) in your home folder (or Documents), unzip the downloaded data into this directory. You should now have a Location futures_ncspm in grassdata.

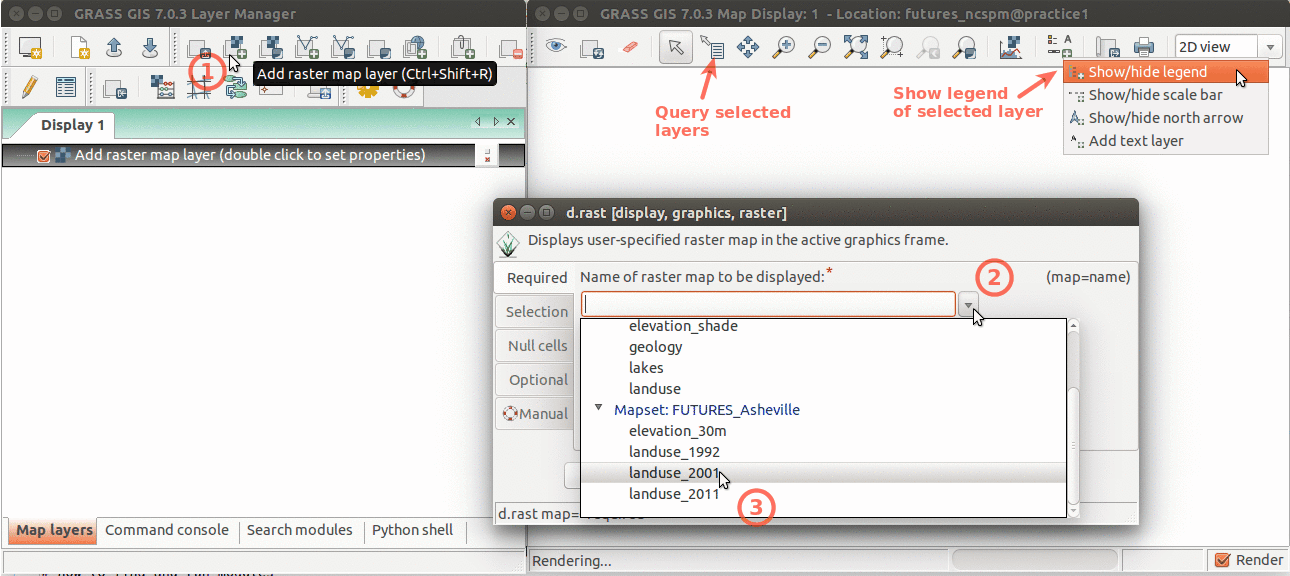

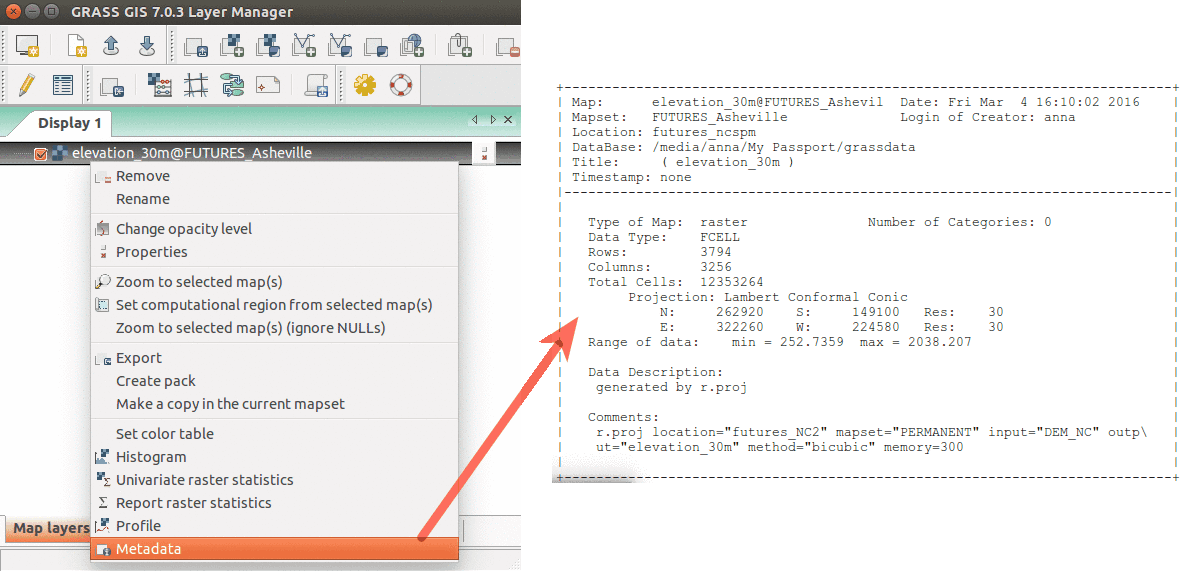

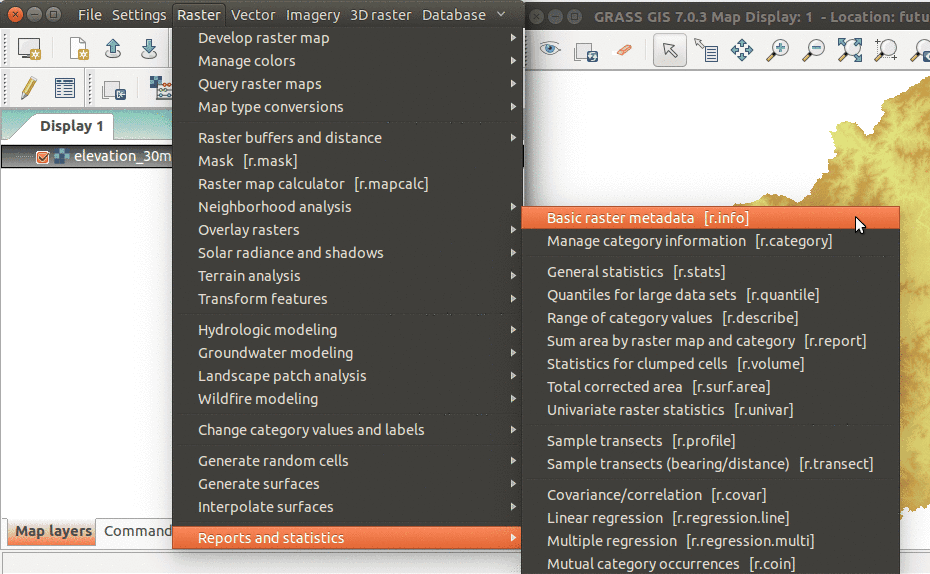

- Launch GRASS and display raster, vector data, explore navigation, querying, adding legend, showing metadata

-

GRASS GIS 7.0.3 startup dialog with downloaded Location and Mapsets for FUTURES workshop

-

Layer Manager and Map Display overview. Annotations show how to add raster layer, query, add legend.

-

Show raster map metadata by right click on layer

- GRASS GIS has over 500 different modules in the core distribution and over 230 addon modules

| Prefix | Function | Example |

|---|---|---|

| r.* | raster processing | r.mapcalc: map algebra |

| v.* | vector processing | v.clean: topological cleaning |

| i.* | imagery processing | i.segment: object recognition |

| db.* | database management | db.select: select values from table |

| r3.* | 3D raster processing | r3.stats: 3D raster statistics |

| t.* | temporal data processing | t.rast.aggregate: temporal aggregation |

| g.* | general data management | g.rename: renames map |

| d.* | display | d.rast: display raster map |

- GRASS modules have GUI and command line interface. In this workshop we use commands to describe the workflow, but we can use both GUI and command line depending on personal preference. Look how GUI and command line interface represent the same tool.

Task: compute aspect (orientation) from provided digital elevation model using module r.slope.aspect using both module dialog and command line. - How to find modules? Modules are organized by their functionality in wxGUI menu, or we can search for them in Search modules tab. If we already know which module to use, we can just type it in the wxGUI command console.

Modules can be found in wxGUI menu

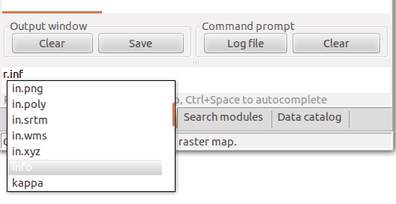

You can search modules by name, description or keywords in Search modules tab

By typing prefix r. we make a list of modules starting with that prefix to show up.

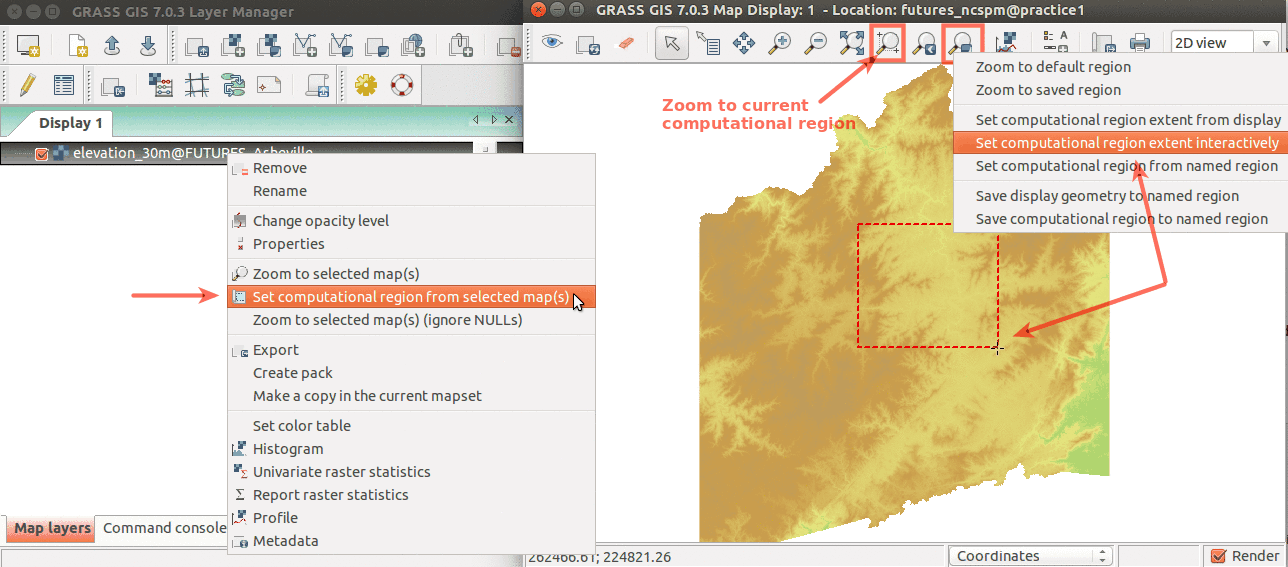

- Computational region - an important raster concept

- defined by region extent and raster resolution

- applies to all raster operations

- persists between GRASS session, can be different for different mapsets

- advantages: keeps your results consistent, avoid clipping, for computationally demanding tasks set region to smaller extent, check your result is good and then set the computational region to the entire study area and rerun analysis

- run

g.region -por in menu Settings - Region - Display region to see current region settings

- GRASS 3D view

Modeling with FUTURES

Initial steps and data preparation

First we will set the computational region of our analyses to an extent covering our study area and aligned with our base landuse rasters:

g.region raster=landuse_2011 -p

We will derive urbanized areas from NLCD dataset for year 1992, 2001 and 2011 by extracting categories category 21 - 24 into a new binary map where developed is 1, undeveloped 0 and NULL (no data) is area unsuitable for development (water, wetlands, protected areas). First we will convert protected areas from vector to raster. We set NULLs to zeros (for simpler raster algebra expression in the next step) using r.null:

v.to.rast input=protected_areas output=protected_areas use=val

r.null map=protected_areas null=0

And then create rasters of developed/undeveloped areas using raster algebra:

r.mapcalc "urban_1992 = if(landuse_1992 >= 21 && landuse_1992 <= 24, 1, if(landuse_1992 == 11 || landuse_1992 >= 90 || protected_areas, null(), 0))"

r.mapcalc "urban_2001 = if(landuse_2001 >= 21 && landuse_2001 <= 24, 1, if(landuse_2001 == 11 || landuse_2001 >= 90 || protected_areas, null(), 0))"

r.mapcalc "urban_2011 = if(landuse_2011 >= 21 && landuse_2011 <= 24, 1, if(landuse_2011 == 11 || landuse_2011 >= 90 || protected_areas, null(), 0))"

We will convert vector counties to raster with the values of the FIPS attribute which links to population file:

v.to.rast input=counties type=area use=attr attribute_column=FIPS output=counties

Before further steps, we will set our working directory so that the input population files and text files we are going to create are saved in one directory and easily accessible. You can do that from menu Settings → GRASS working environment → Change working directory. Select (or create) a directory and move there the downloaded files population_projection.csv and population_trend.csv.

Potential submodel

Module r.futures.potential implements POTENTIAL submodel as a part of FUTURES land change model. POTENTIAL is implemented using a set of coefficients that relate a selection of site suitability factors to the probability of a place becoming developed. This is implemented using the parameter table in combination with maps of those site suitability factors (mapped predictors). The coefficients are obtained by conducting multilevel logistic regression in R with package lme4 where the coefficients may vary by county. The best model is selected automatically using dredge function from package MuMIn.

First, we will derive couple of predictors.

Predictors

Slope

We will derive slope in degrees from digital elevation model using r.slope.aspect module:

r.slope.aspect elevation=elevation_30m slope=slope

Distance from lakes/rivers

First we will extract category Open Water from 2011 NLCD dataset:

r.mapcalc "water = if(landuse_2011 == 11, 1, null())"

Then we compute the distance to water with module r.grow.distance and set color table to shades of blue:

r.grow.distance input=water distance=dist_to_water

r.colors -n map=dist_to_water color=blues

Distance from protected areas

We will use raster protected of protected areas we already created, but we will set NULL values to zero. We compute the distance to protected areas with module r.grow.distance and set color table from green to red:

r.null map=protected_areas setnull=0

r.grow.distance input=protected_areas distance=dist_to_protected

r.colors map=dist_to_protected color=gyr

Forests

We will smooth the transition between forest and other land use, see NLCD category Open Water:

r.mapcalc "forest = if(landuse_2011 >= 41 && landuse_2011 <= 43, 1, 0)"

r.neighbors -c input=forest output=forest_smooth size=15 method=average

r.colors map=forest_smooth color=ndvi

Travel time to cities

Here we will compute travel time to cities (with population > 5000) as cumulative cost distance where cost is defined as travel time on roads. First we specify the speed on different types of roads. We copy the roads raster into our mapset so that we can change it by adding a new attribute field speed. Then we assign speed values (km/h) based on the type of road:

g.copy vector=roads,myroads

v.db.addcolumn map=myroads columns="speed double precision"

v.db.update map=myroads column=speed value=50 where="MTFCC = 'S1400'"

v.db.update map=myroads column=speed value=100 where="MTFCC IN ('S1100', 'S1200')"

Now we rasterize the selected road types using the speed values from the attribute table as raster values.

v.to.rast input=myroads type=line where="MTFCC IN ('S1100', 'S1200', 'S1400')" output=roads_speed use=attr attribute_column=speed

We set the rest of the area to low speed and recompute the speed as time to travel through a 30m cell in minutes:

r.null map=roads_speed null=5

r.mapcalc "roads_travel_time = 1.8 / roads_speed"

Finally we compute the travel time to larger cities using r.cost:

r.cost input=roads_travel_time output=travel_time_cities start_points=cities

r.colors map=travel_time_cities color=byr

Road density

We will rasterize roads and use moving window analysis (r.neighbors) to compute road density:

v.to.rast input=roads output=roads use=val type=line

r.null map=roads null=0

r.neighbors -c input=roads output=road_dens size=25 method=average

Distance to interchanges

We will consider TIGER roads of type Ramp as interchanges, rasterize them and compute euclidean distance to them:

v.to.rast input=roads type=line where="MTFCC = 'S1630'" output=interchanges use=val

r.grow.distance -m input=interchanges distance=dist_interchanges

Development pressure

We compute development pressure with r.futures.devpressure. Development pressure is a predictor based on number of neighboring developed cells within search distance, weighted by distance. The development pressure variable plays a special role in the model, allowing for a feedback between predicted change and change in subsequent steps.

r.futures.devpressure -n input=urban_2011 output=devpressure_2 method=gravity size=7 gamma=2 scaling_factor=10

r.futures.devpressure -n input=urban_2011 output=devpressure_1 method=gravity size=10 gamma=1 scaling_factor=1

r.futures.devpressure -n input=urban_2011 output=devpressure_0_5 method=gravity size=30 gamma=0.5 scaling_factor=0.1

When gamma increases, development influence decreases more rapidly with distance. Size is half the size of the moving window. When gamma is low, local development influences more distant places. We will derive 3 layers with different gamma and size parameters for the potential statistical model.

Rescaling variables

First we will look at the ranges of our predictor variables by running a short Python code snippet in Python shell:

for name in ['slope', 'dist_to_water', 'dist_to_protected', 'forest_smooth', 'travel_time_cities', 'road_dens', 'dist_interchanges', 'devpressure_0_5', 'devpressure_1']:

minmax = grass.raster_info(name)

print name, minmax['min'], minmax['max']

We will rescale some of our input variables:

r.mapcalc "dist_to_water_km = dist_to_water / 1000"

r.mapcalc "dist_to_protected_km = dist_to_protected / 1000"

r.mapcalc "dist_interchanges_km = dist_interchanges / 1000"

r.mapcalc "road_dens_perc = road_dens * 100"

r.mapcalc "forest_smooth_perc = forest_smooth * 100"

Sampling

To sample only in the analyzed counties, we will clip development layer:

r.mapcalc "urban_change_01_11 = if(urban_2011 == 1, if(urban_2001 == 0, 1, null()), 0)"

r.mapcalc "urban_change_clip = if(counties, urban_change_01_11)"

To estimate the number of sampling points, we can use r.report to report number of developed/undeveloped cells and their ratio.

r.report map=urban_change_clip units=h,c,p

We will sample the predictors and the response variable with 5000 random points in undeveloped areas and 1000 points in developed area:

r.sample.category input=urban_change_clip output=sampling sampled=counties,devpressure_0_5,devpressure_1,devpressure_2,slope,road_dens_perc,forest_smooth_perc,dist_to_water_km,dist_to_protected_km,dist_interchanges_km,travel_time_cities npoints=5000,1000

The attribute table can be exported as CSV file (not necessary step):

v.db.select map=sampling columns=urban_change_clip,counties,devpressure_0_5,devpressure_1,slope,road_dens_perc,forest_smooth_perc,dist_to_water_km,dist_to_protected_km,dist_interchanges_km,travel_time_cities separator=comma file=samples.csv

Development potential

Now we find best model for predicting urbanization using r.futures.potential which wraps an R script.

We can run R dredge function to find "best" model. We can specify minimum and maximum number of predictors the final model should use.

r.futures.potential -d input=sampling output=potential.csv columns=devpressure_1,slope,road_dens_perc,forest_smooth_perc,dist_to_water_km,dist_to_protected_km,dist_interchanges_km,travel_time_cities developed_column=urban_change_clip subregions_column=counties min_variables=4

Also, we can play with different combinations of predictors, for example:

r.futures.potential input=sampling output=potential.csv columns=devpressure_2,road_dens_perc,slope,dist_interchanges_km,dist_to_water_km developed_column=urban_change_clip subregions_column=counties --o

r.futures.potential input=sampling output=potential.csv columns=devpressure_1,road_dens_perc,slope,dist_interchanges_km,dist_to_water_km developed_column=urban_change_clip subregions_column=counties --o

...

You can then open the output file potential.csv, which is a CSV file with tabs as separators.

For this tutorial, the final potential is created with:

r.futures.potential input=sampling output=potential.csv columns=devpressure_2,road_dens_perc,slope,dist_interchanges_km,dist_to_water_km developed_column=urban_change_clip subregions_column=counties --o

We can now visualize the suitability surface using module r.futures.potsurface. It creates initial development potential (suitability) raster from predictors and model coefficients and serves only for evaluating development potential model. The values of the resulting raster range from 0 to 1.

r.futures.potsurface input=potential.csv subregions=counties output=suitability

r.colors map=suitability color=byr

Demand submodel

First we will mask out roads so that they don't influence into per capita land demand relation.

v.to.rast input=roads type=line output=roads_mask use=val

r.mask roads_mask -i

We will use r.futures.demand which derives the population vs. development relation. The relation can be linear/logarithmic/logarithmic2/exponential/exponential approach. Look for examples of the different relations in the manual.

- linear: y = A + Bx

- logarithmic: y = A + Bln(x)

- logarithmic2: y = A + B * ln(x - C) (requires SciPy)

- exponential: y = Ae^(BX)

- exp_approach: y = (1 - e^(-A(x - B))) + C (requires SciPy)

The format of the input population CSV files is described in the manual. It is important to have synchronized categories of subregions and the column headers of the CSV files (in our case FIPS number). How to simply generate the list of years (for which demand is computed) is described in r.futures.demand manual, for example run this in Python console:

','.join([str(i) for i in range(2011, 2036)])

Then we can create the DEMAND file:

r.futures.demand development=urban_1992,urban_2001,urban_2011 subregions=counties observed_population=population_trend.csv projected_population=population_projection.csv simulation_times=2011,2012,2013,2014,2015,2016,2017,2018,2019,2020,2021,2022,2023,2024,2025,2026,2027,2028,2029,2030,2031,2032,2033,2034,2035 plot=plot_demand.pdf demand=demand.csv separator=comma

In your current working directory, you should find files plot_demand.png and demand.csv.

If you have SciPy installed, you can experiment with other methods for fitting the functions:

r.futures.demand ... method=logarithmic2

r.futures.demand ... method=exp_approach

If necessary, you can create a set of demand files produced by fitting each method separately and then pick for each county the method which seems best and manually create a new demand file.

When you are finished, remove the mask as it is not needed for the next steps.

r.mask -r

Patch calibration

Patch calibration can be a very time consuming computation. Therefore we select only one county (Buncombe):

v.to.rast input=counties type=area where="FIPS == 37021" use=attr attribute_column=FIPS output=calib_county

g.region raster=calib_county zoom=calib_county

to derive patches of new development by comparing historical and latest development. We can keep only patches with minimum size 2 cells (1800 = 2 x 30 x 30 m).

r.futures.calib development_start=urban_1992 development_end=urban_2011 subregions=counties patch_sizes=patches.txt patch_threshold=1800 -l

We obtained a file patches.txt (used later in the PGA) - a patch size distribution file - containing sizes of all found patches.

We can look at the distribution of the patch sizes:

from matplotlib import pyplot as plt

with open('patches.txt') as f:

patches = [int(patch) for patch in f.readlines()]

plt.hist(patches, 2000)

plt.xlim(0,50)

plt.show()

At this point, we start the calibration to get best parameters of patch shape (for this tutorial, this step can be skipped and the suggested parameters are used).

r.futures.calib development_start=urban_1992 development_end=urban_2011 subregions=calib_county patch_sizes=patches.txt calibration_results=calib.csv patch_threshold=1800 repeat=5 compactness_mean=0.1,0.3,0.5,0.7,0.9 compactness_range=0.05 discount_factor=0.1,0.3,0.5,0.7,0.9 predictors=road_dens_perc,forest_smooth_perc,dist_to_protected_km demand=demand.csv devpot_params=potential.csv num_neighbors=4 seed_search=2 development_pressure=devpressure_0_5 development_pressure_approach=gravity n_dev_neighbourhood=30 gamma=0.5 scaling_factor=0.1

FUTURES simulation

We will switch back to our previous region:

g.region raster=landuse_2011 -p

Now we have all the inputs necessary for running r.futures.pga:

The entire command is here:

r.futures.pga subregions=counties developed=urban_2011 predictors=slope,forest_smooth_perc,dist_interchanges_km,travel_time_cities devpot_params=potential.csv development_pressure=devpressure_1 n_dev_neighbourhood=10 development_pressure_approach=gravity gamma=1 scaling_factor=1 demand=demand.csv discount_factor=0.3 compactness_mean=0.2 compactness_range=0.1 patch_sizes=patches.txt num_neighbors=4 seed_search=2 random_seed=1 output=final output_series=final

Quick description

The command parameters and their values:

- raster map of counties with their categories

... subregions=counties ...

- raster of developed (1), undeveloped (0) and NULLs for undevelopable areas

... developed=urban_2011 ...

- predictors selected with r.futures.potential and their coefficients in a potential.csv file. The order of predictors must match the order in the file and the categories of the counties must match raster counties.

... predictors=slope,forest_smooth_perc,dist_interchanges_km,travel_time_cities devpot_params=potential.csv ...

- initial development pressure computed by r.futures.devpressure, it's important to set here the same parameters

... development_pressure=devpressure_1 n_dev_neighbourhood=10 development_pressure_approach=gravity gamma=1 scaling_factor=1 ...

- per capita land demand computed by r.futures.demand, the categories of the counties must match raster counties.

... demand=demand.csv ...

- patch parameters from the calibration step

... discount_factor=0.3 compactness_mean=0.4 compactness_range=0.08 patch_sizes=patches.txt ...

- recommended parameters for patch growing algorithm

... num_neighbors=4 seed_search=2 ...

- set random seed for repeatable results or set flag -s to generate seed automatically

... random_seed=1 ...

- specify final output map and optionally basename for intermediate raster maps

output=final output_series=final

Scenarios

Scenarios involving policies that encourage infill versus sprawl can be explored using the incentive_power parameter of r.futures.pga, which uses a power function to transform the evenness of the probability gradient in POTENTIAL. You can change the power to a number between 0.25 and 4 to test urban sprawl/infill scenarios. Higher power leads to infill behavior, lower power to urban sprawl.

Postprocessing

Follow a tutorial how to make an animation from the results.